Model Context Protocol (MCP): Eliminate Context-Switching for Developers

Mastering MCP: How to Kill Context-Switching for Good

🔑 Key Takeaways

- Model Context Protocol (MCP) connects your AI directly to your real tools and data

- MCP servers eliminate constant tab-switching between logs, dashboards, and terminals

- Stripe AI integration becomes dramatically more powerful when live data is accessible

- AI debugging tools work best when grounded in real-time context

- Case Study: The Stripe Debugger — Richard uses an MCP server to pull live webhook data and subscription changes directly into his terminal

How Much Time Are You Losing to Tab-Hopping?

Be honest.

When something breaks in production, your workflow probably looks like this:

- Check the logs

- Open Stripe

- Cross-reference GitHub

- Inspect the database

- Ask your AI assistant

- Copy-paste context

- Repeat

You’re not debugging.

You’re context-switching.

And context-switching is silent productivity debt.

For developers trying to move fast — especially in SaaS environments — this friction compounds daily. It slows incident response. It delays releases. It fragments thinking.

And the worst part?

Your AI assistant could help… but it’s blind.

The Core Problem: Your AI Has No Access to Reality

Most AI debugging tools operate in isolation.

They see:

- Your pasted logs

- Snippets of stack traces

- Partial error messages

They don’t see:

- Live webhook payloads

- Real subscription state

- Database rows

- Git history

Without real-time context, AI can only speculate.

This leads to:

- Generic debugging advice

- Wrong assumptions

- Repeated clarifications

- Wasted cycles

If you ignore this gap, you’ll keep treating AI like a smart autocomplete instead of a true development partner.

Enter Model Context Protocol (MCP)

The Model Context Protocol changes the game.

Instead of pasting context into AI, MCP allows your AI to query tools directly via structured servers.

Think of it as:

A secure bridge between your AI and your tech stack.

Using MCP servers, your assistant can access:

- Payment provider APIs

- Git repositories

- Database records

- Logs

- Monitoring tools

In real time.

No copy-paste required.
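To make that concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool by sending a `tools/call` request. The snippet below is a minimal sketch of what such a request could look like. The tool name `fetchRecentWebhooks` and its `user_id` and `limit` arguments are assumptions for illustration, not part of any real server.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "fetchRecentWebhooks", {"user_id": "8392", "limit": 5})
print(request)
```

In practice your AI client builds and sends these messages for you. The point is that the request is structured data, not pasted text.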

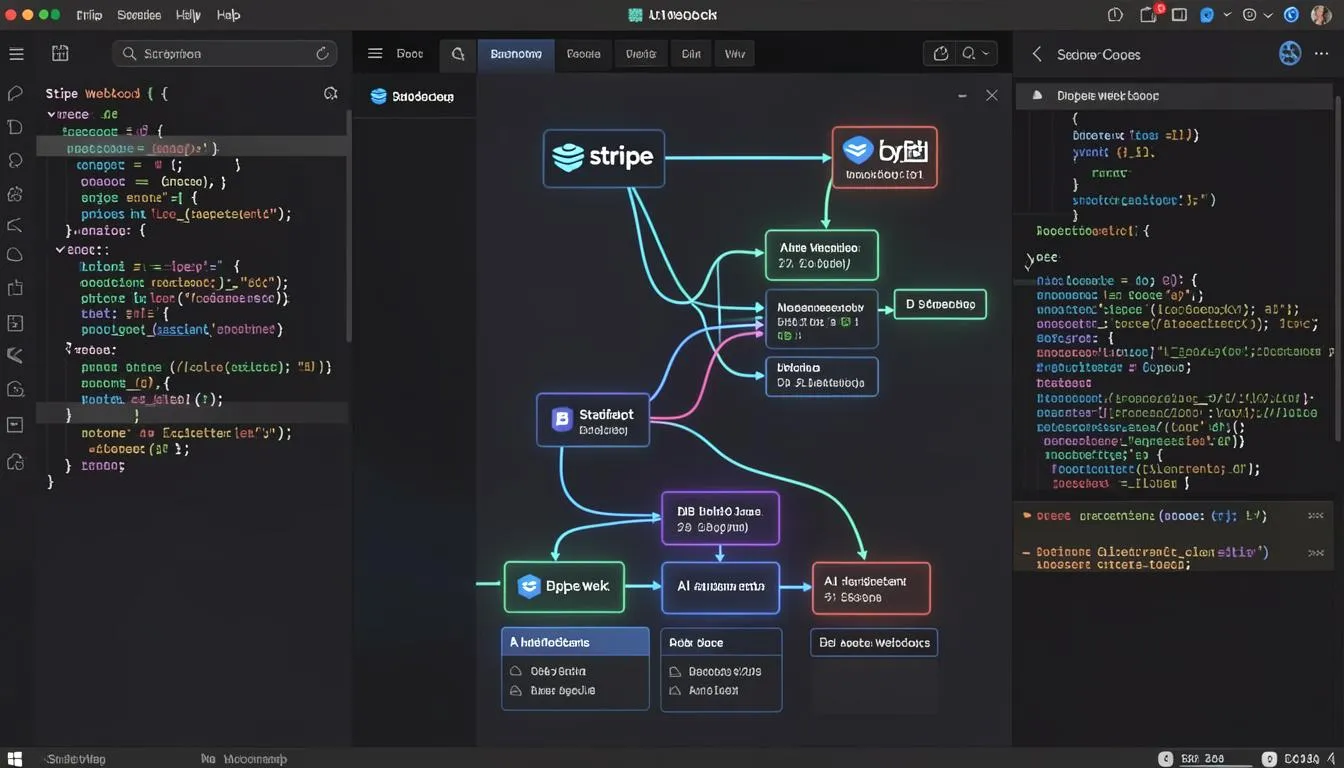

Case Study: The Stripe Debugger

Richard, a SaaS founder, built a Stripe debugging workflow using MCP.

Instead of manually:

- Logging into Stripe

- Filtering webhooks

- Checking subscription states

- Comparing event timestamps

He configured an MCP server connected to Stripe.

Now, inside his terminal session, he can say:

“Check the last 5 webhook events for user ID 8392 and summarize subscription changes.”

The AI fetches:

- Live webhook payloads

- Subscription status

- Payment intent history

Directly.
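Here is a rough sketch of the "summarize subscription changes" step once the events have been fetched. It is not Richard's actual code. The sample events are made up and only loosely shaped like Stripe webhook events (a `type`, a `created` timestamp, and `data.previous_attributes` on update events), and `summarize_subscription_changes` is a hypothetical helper name.

```python
from datetime import datetime, timezone

# Made-up sample events, shaped loosely like Stripe webhook events.
SAMPLE_EVENTS = [
    {
        "type": "customer.subscription.updated",
        "created": 1700000000,
        "data": {
            "object": {"id": "sub_example", "status": "past_due"},
            "previous_attributes": {"status": "active"},
        },
    },
    {
        "type": "invoice.payment_failed",
        "created": 1699999000,
        "data": {"object": {"id": "in_example"}},
    },
    {
        "type": "customer.subscription.updated",
        "created": 1700003600,
        "data": {
            "object": {"id": "sub_example", "status": "active"},
            "previous_attributes": {"status": "past_due"},
        },
    },
]


def summarize_subscription_changes(events: list[dict]) -> list[str]:
    """Return one line per subscription status change, oldest first."""
    lines = []
    for event in sorted(events, key=lambda e: e["created"]):
        if event["type"] != "customer.subscription.updated":
            continue
        previous = event["data"].get("previous_attributes", {}).get("status")
        current = event["data"]["object"]["status"]
        if previous is None or previous == current:
            continue  # the update did not touch the status field
        when = datetime.fromtimestamp(event["created"], tz=timezone.utc)
        lines.append(f"{when:%Y-%m-%d %H:%M} UTC: {previous} -> {current}")
    return lines


summary = summarize_subscription_changes(SAMPLE_EVENTS)
print("\n".join(summary))
```

With an MCP server in place, the AI runs this kind of filtering and summarizing for you after it calls the tool that returns the events.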

This Stripe AI integration eliminated 70% of his manual inspection steps.

Faster debugging.

Cleaner mental state.

Better developer productivity.

Why MCP Servers Kill Context-Switching

Let’s break this down practically.

1. AI Moves From Advisor to Operator

Without MCP:

- AI suggests what you might check.

With MCP:

- AI actually checks it.

This is the difference between theoretical reasoning and grounded reasoning.

2. Debugging Becomes Conversational

Instead of digging through dashboards, you can:

- Ask follow-up queries

- Filter logs dynamically

- Compare environments

- Generate summaries

All inside your terminal or IDE.

AI debugging tools become interactive investigators.

3. Reduced Cognitive Fragmentation

Every tab switch costs mental energy.

When your workflow stays inside:

- Terminal

- IDE

- One conversation

You maintain flow state.

Flow is where productivity lives.

How to Implement MCP in Your Stack

Here’s a simple blueprint.

Step 1: Identify High-Friction Tools

Start with tools where you constantly switch contexts:

- Stripe

- GitHub

- Database dashboards

- Logging systems

For Git workflows, connecting MCP to GitHub allows AI to inspect PRs, diffs, and commit history directly.

Step 2: Deploy MCP Servers Securely

MCP servers act as structured connectors.

Best practices:

- Use scoped API keys

- Start with read-only permissions

- Log AI queries

- Monitor access patterns

Security is critical. AI access should be intentional, not unrestricted.
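One way to sketch the "read-only first" and "log AI queries" practices is a small gate in front of your tool handlers. Everything here is an assumption for illustration: the tool names, the `guarded_call` helper, and the stub handlers that stand in for real API calls. Scoped API keys still need to be configured with your provider; this only covers the server side.

```python
import logging

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("mcp.audit")

# Hypothetical tool names; only these read-only tools may be called.
READ_ONLY_TOOLS = {"fetchRecentWebhooks", "getSubscriptionStatus"}


class ToolNotAllowed(Exception):
    """Raised when a caller requests a tool outside the allowlist."""


def guarded_call(tool_name: str, arguments: dict, handlers: dict):
    """Log every request, then run it only if the tool is allowlisted."""
    audit_log.info("tool=%s arguments=%s", tool_name, arguments)
    if tool_name not in READ_ONLY_TOOLS:
        audit_log.warning("blocked tool=%s", tool_name)
        raise ToolNotAllowed(tool_name)
    return handlers[tool_name](**arguments)


# Stub handlers standing in for real API calls.
handlers = {
    "fetchRecentWebhooks": lambda user_id: {"user_id": user_id, "events": []},
    "getSubscriptionStatus": lambda user_id: {"user_id": user_id, "status": "active"},
    "cancelSubscription": lambda user_id: {"user_id": user_id, "status": "canceled"},
}

print(guarded_call("getSubscriptionStatus", {"user_id": "8392"}, handlers))
```

A write operation such as the hypothetical `cancelSubscription` is registered but never reachable until you deliberately add it to the allowlist, and every attempt leaves a log line behind.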

Step 3: Define Clear Tool Interfaces

Don’t expose everything blindly.

Instead:

- Create specific, narrowly scoped tools (e.g., fetchRecentWebhooks)

- Standardize response formats

- Add validation layers

This makes the AI’s reasoning cleaner and safer.
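As a sketch of what a narrow interface could look like, the example below defines a single tool with an input schema, validates arguments by hand, and always returns the same result shape. The `name`, `description`, and `inputSchema` fields and the `content`/`isError` result follow the shapes MCP uses for tools. The specific parameters, limits, and helper names are assumptions, and the validation is hand-rolled for this one schema rather than a general JSON Schema validator.

```python
# Hypothetical tool definition: a name, a description, and a JSON Schema.
FETCH_RECENT_WEBHOOKS = {
    "name": "fetchRecentWebhooks",
    "description": "Return the most recent webhook events for one user.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "user_id": {"type": "string", "minLength": 1},
            "limit": {"type": "integer", "minimum": 1, "maximum": 20},
        },
        "required": ["user_id"],
        "additionalProperties": False,
    },
}


def validate_arguments(arguments: dict) -> dict:
    """Check arguments against the schema above and fill in defaults."""
    unknown = set(arguments) - {"user_id", "limit"}
    if unknown:
        raise ValueError(f"unexpected arguments: {sorted(unknown)}")
    user_id = arguments.get("user_id")
    if not isinstance(user_id, str) or not user_id:
        raise ValueError("user_id must be a non-empty string")
    limit = arguments.get("limit", 5)
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(limit, bool) or not isinstance(limit, int) or not 1 <= limit <= 20:
        raise ValueError("limit must be an integer between 1 and 20")
    return {"user_id": user_id, "limit": limit}


def text_result(text: str, is_error: bool = False) -> dict:
    """Wrap output in one consistent result shape."""
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def call_fetch_recent_webhooks(arguments: dict) -> dict:
    """Validate first, then respond in the standard shape either way."""
    try:
        clean = validate_arguments(arguments)
    except ValueError as exc:
        return text_result(str(exc), is_error=True)
    # A real server would query the payment provider here.
    return text_result(f"would fetch {clean['limit']} events for user {clean['user_id']}")


print(call_fetch_recent_webhooks({"user_id": "8392", "limit": 5}))
```

Returning validation failures as a structured error, instead of raising, gives the AI something it can read and correct on the next call.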

Step 4: Optimize for Developer Productivity

The goal isn’t novelty.

It’s speed.

Measure:

- Time to diagnose incident

- Number of manual steps eliminated

- Reduction in dashboard switching
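If you track incidents somewhere, the comparison can be as simple as the sketch below. The incident records are made-up placeholders, not real measurements, and the field names (`minutes`, `dashboards`) are assumptions. Swap in your own numbers from before and after the MCP rollout.

```python
from statistics import median

# Made-up incident records for illustration only: minutes from alert to
# diagnosis, and how many separate dashboards were opened along the way.
before = [
    {"minutes": 42, "dashboards": 5},
    {"minutes": 30, "dashboards": 4},
    {"minutes": 55, "dashboards": 6},
]
after = [
    {"minutes": 18, "dashboards": 2},
    {"minutes": 12, "dashboards": 1},
    {"minutes": 25, "dashboards": 3},
]


def summarize(incidents: list[dict]) -> dict:
    """Median time to diagnose and average dashboards opened per incident."""
    return {
        "median_minutes": median(i["minutes"] for i in incidents),
        "avg_dashboards": sum(i["dashboards"] for i in incidents) / len(incidents),
    }


def percent_reduction(old: float, new: float) -> float:
    """Percentage drop from old to new, rounded to one decimal place."""
    return round(100 * (old - new) / old, 1)


baseline, current = summarize(before), summarize(after)
for metric in baseline:
    change = percent_reduction(baseline[metric], current[metric])
    print(f"{metric}: {baseline[metric]} -> {current[metric]} ({change}% lower)")
```

The median is used for diagnosis time so that one unusually long incident does not skew the comparison.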

If you’re exploring broader AI agent adoption strategies, SaaSNext provides in-depth breakdowns on integrating AI into operational workflows: 👉 https://saasnext.in/

They focus on turning AI from a chat tool into a production-grade system — a philosophy that aligns perfectly with MCP adoption.

MCP and the Future of AI Debugging Tools

This isn’t just about Stripe.

Imagine AI that can:

- Pull DB rows for a failing query

- Compare staging vs production configs

- Analyze recent deployments

- Cross-reference logs with commits

That’s where Model Context Protocol shines.

Instead of guessing, your AI works with evidence.
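As one small example of that evidence, here is a sketch of cross-referencing an error timestamp with deployments to find the commit that was live when the error occurred. The deployment list, the commit SHAs, and the `deploy_before` helper are all hypothetical. In a real setup the data would come from your logging and Git tools through MCP.

```python
from bisect import bisect_right

# Made-up deployments as (Unix timestamp, commit SHA), sorted by time.
DEPLOYS = [(1000, "a1b2c3d"), (2000, "e4f5a6b"), (3000, "c7d8e9f")]


def deploy_before(error_ts: int):
    """Return the SHA of the latest deployment at or before error_ts, or None."""
    times = [ts for ts, _ in DEPLOYS]
    index = bisect_right(times, error_ts)
    return DEPLOYS[index - 1][1] if index else None


# An error logged at t=2500 happened while commit e4f5a6b was live.
print(deploy_before(2500))
```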

In advanced automation environments — including those discussed by SaaSNext — MCP-style architectures are becoming foundational to agentic workflows.

Because true AI productivity comes from integration, not isolation.

Common Questions

What is Model Context Protocol?

Model Context Protocol (MCP) is an open protocol that lets AI systems securely access and query real-world tools and APIs through structured servers.

How does MCP improve developer productivity?

It eliminates context-switching by allowing AI to fetch logs, API data, and repository information directly within your workflow.

Is Stripe AI integration safe?

It can be, when implemented with scoped API keys, read-only access, and monitoring controls.

Are MCP servers hard to build?

Not necessarily. Many can be lightweight wrappers around existing APIs with defined query schemas.

The Bigger Insight: Context Is the Real Multiplier

AI doesn’t need more intelligence.

It needs more context.

When you connect it directly to your systems:

- Answers become specific

- Debugging becomes faster

- Workflow becomes seamless

You stop treating AI as an assistant.

And start treating it as a collaborator.

Stop Switching Tabs. Start Switching Architecture.

Context-switching is a tax you’ve normalized.

MCP removes it.

By implementing Model Context Protocol and connecting your AI to tools like Stripe and GitHub, you eliminate the copy-paste cycle and unlock real developer productivity gains.

If you’re serious about modern AI debugging tools, start with one MCP server.

One integration. One workflow.

Then expand.

And if you want structured guidance on deploying AI agents inside real SaaS systems, explore SaaSNext’s resources — they’re built for teams ready to move beyond chat.

Because the future of development isn’t more tabs.

It’s less friction.